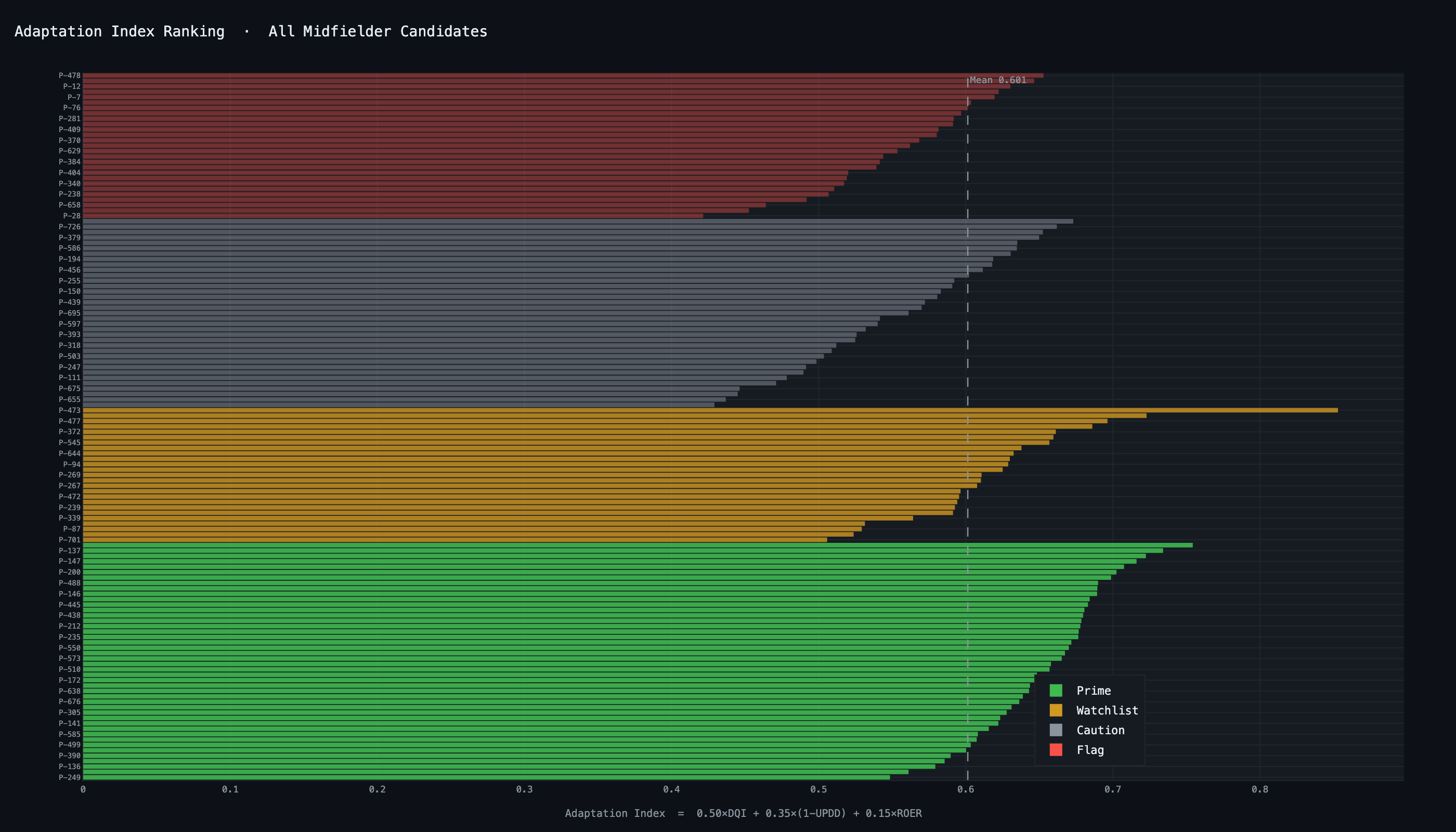

All metrics derived exclusively from Opta Vision feeds — no external data required. Every metric traces to an inspectable pass event. Pull any DQI score and watch the underlying decision. Pairwise Spearman correlations confirm each metric captures a genuinely independent cognitive dimension.

MA36 — Event data

~51K events · ~470K option records. passOption, pressure bands, xPass, xThreat, lineBreakingPass. Primary source for OVS, DQI, UPDD, POR.

MA58 — Off-ball runs

~27K off-ball runs. increasesAvailability, activeRun, relatedEvents linkage. Enables ROER and MA58 quality-stamp validation for 54 players.

MA60 + Shapes + Tracking

Phase-of-play labels for JSD. Shapes for role labelling. Tracking for spatial pressure calibration and pressure delta at origin/receiver.

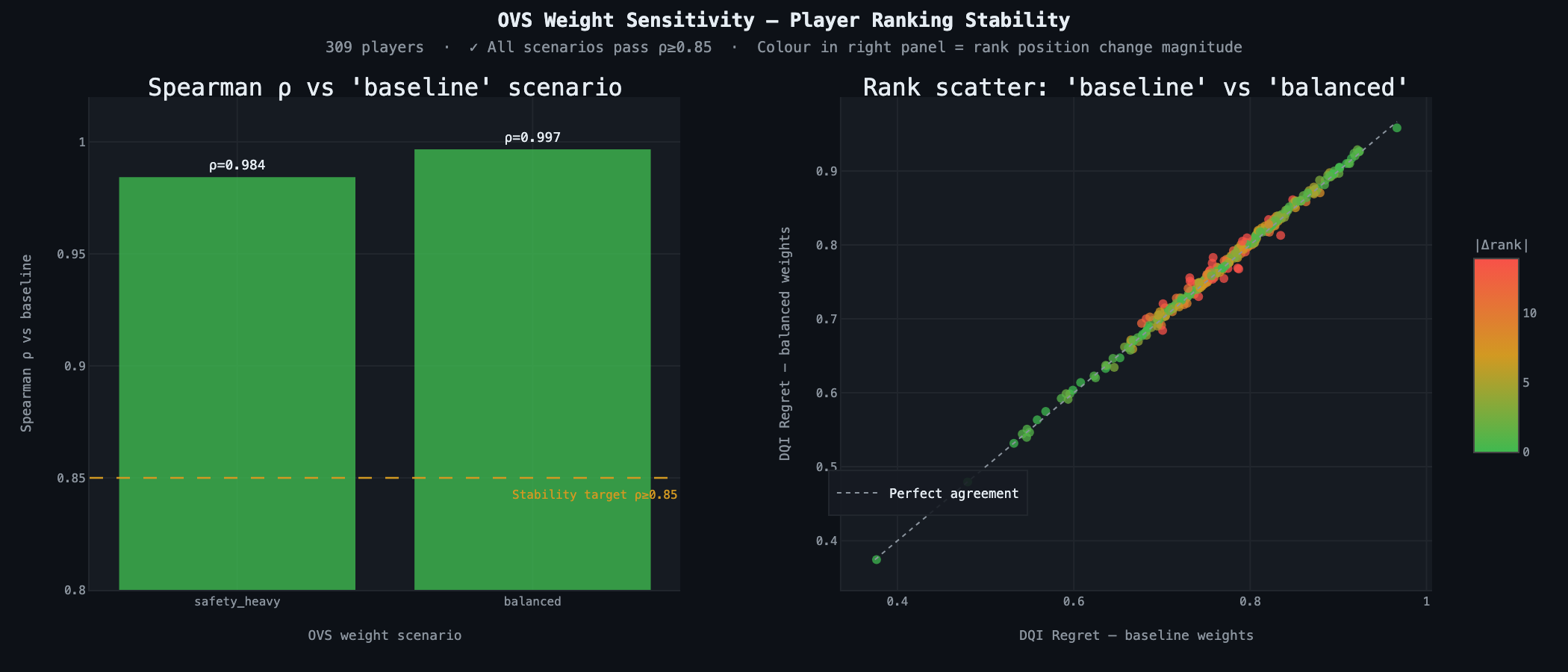

OVS — Foundation

Option Value Score

$$OVS_i = w_S \cdot xP_i + w_T \cdot xT_i$$

Baseline: w_S = 0.47 (safety), w_T = 0.53 (threat). Additive formulation validated across 470K options. Multiplicative collapses to 12% event survival (xT near zero); additive achieves 94%. Not a preference — a sample-size constraint. LBP boost (+0.15/+0.25) on chosen pass for POR only.
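A minimal sketch of the additive score with the baseline weights above. The option records and their field names (`receiver`, `x_pass`, `x_threat`) are hypothetical, and the multiplicative variant is included only to illustrate how a near-zero xT squashes the product; it is not the pipeline's own code.

```python
W_SAFETY = 0.47  # w_S, baseline safety weight quoted in the text
W_THREAT = 0.53  # w_T, baseline threat weight quoted in the text


def ovs_additive(x_pass: float, x_threat: float,
                 w_s: float = W_SAFETY, w_t: float = W_THREAT) -> float:
    """Additive Option Value Score: w_S * xP + w_T * xT."""
    return w_s * x_pass + w_t * x_threat


def ovs_multiplicative(x_pass: float, x_threat: float) -> float:
    """Multiplicative alternative, shown for contrast only."""
    return x_pass * x_threat


# Hypothetical pass options for one event (illustrative values only).
options = [
    {"receiver": "A", "x_pass": 0.92, "x_threat": 0.004},  # safe, low threat
    {"receiver": "B", "x_pass": 0.61, "x_threat": 0.050},  # riskier, more threat
]

for opt in options:
    add = ovs_additive(opt["x_pass"], opt["x_threat"])
    mul = ovs_multiplicative(opt["x_pass"], opt["x_threat"])
    print(f'{opt["receiver"]}: additive={add:.4f} multiplicative={mul:.4f}')
```

With xT this small, the multiplicative score for option A sits close to zero while the additive score stays usable.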

Three weight scenarios: baseline, safety_heavy, balanced. Player ranking Spearman ρ = 0.984–0.997 across all — effectively invariant to weight perturbation.

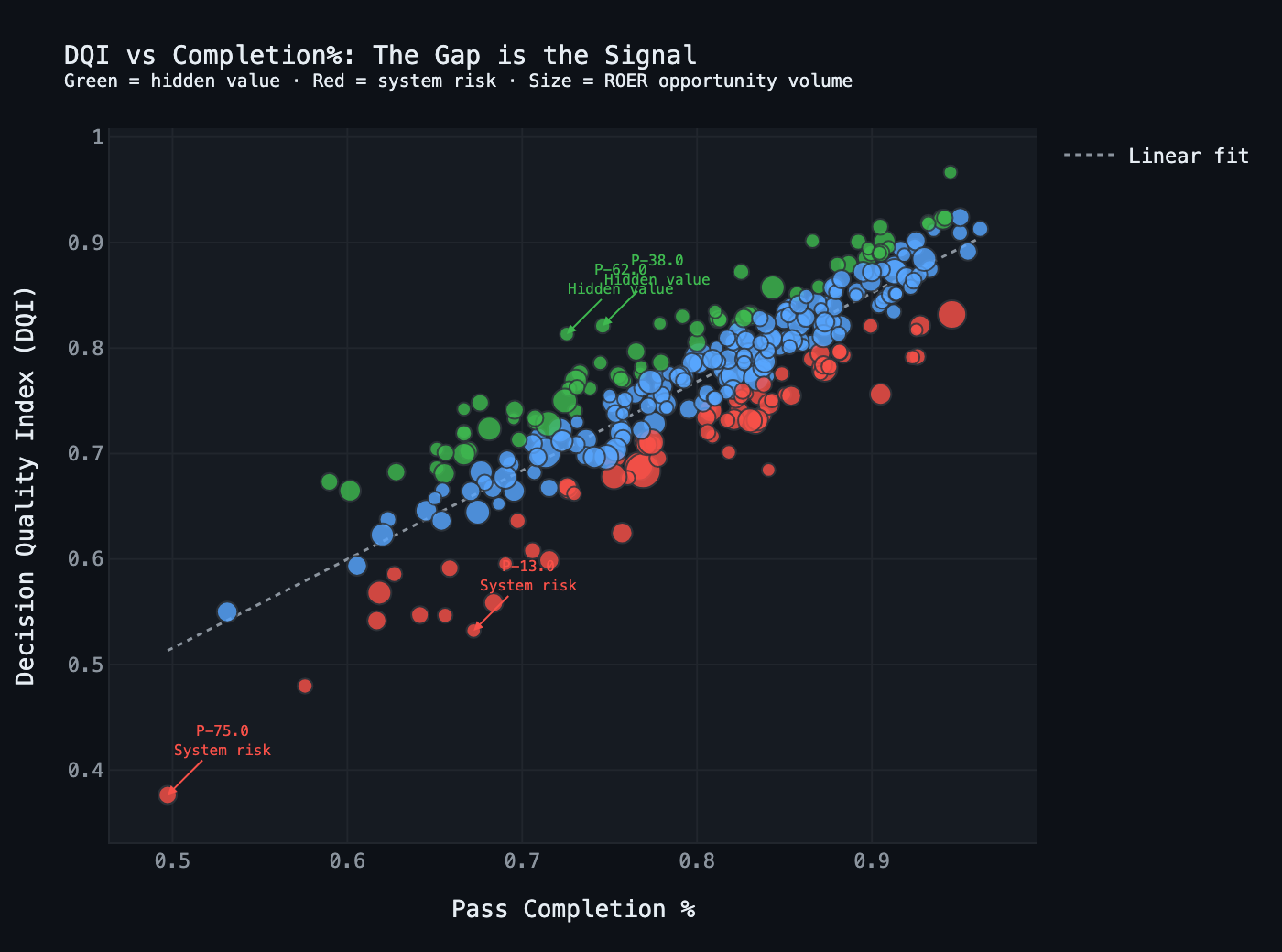

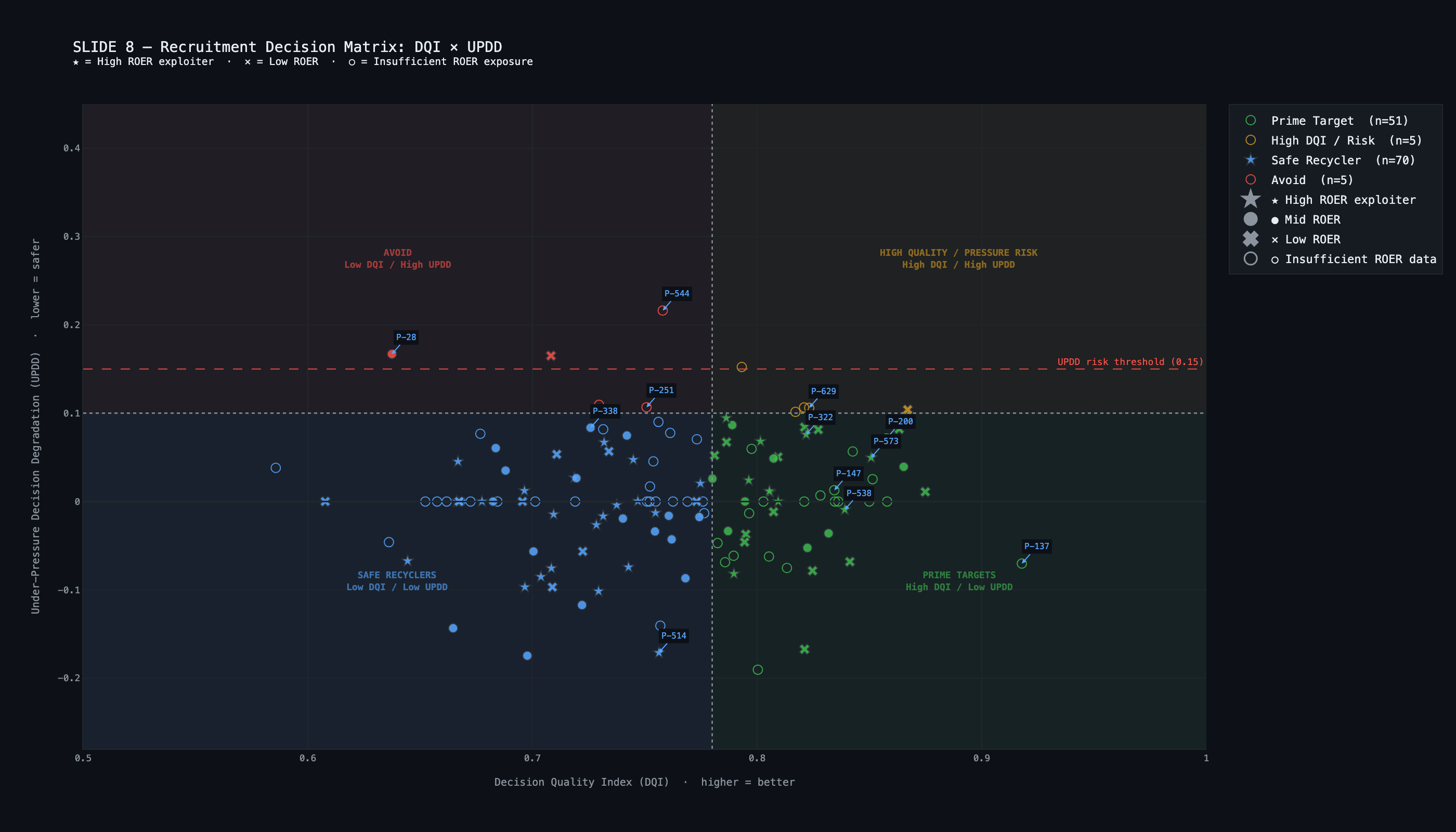

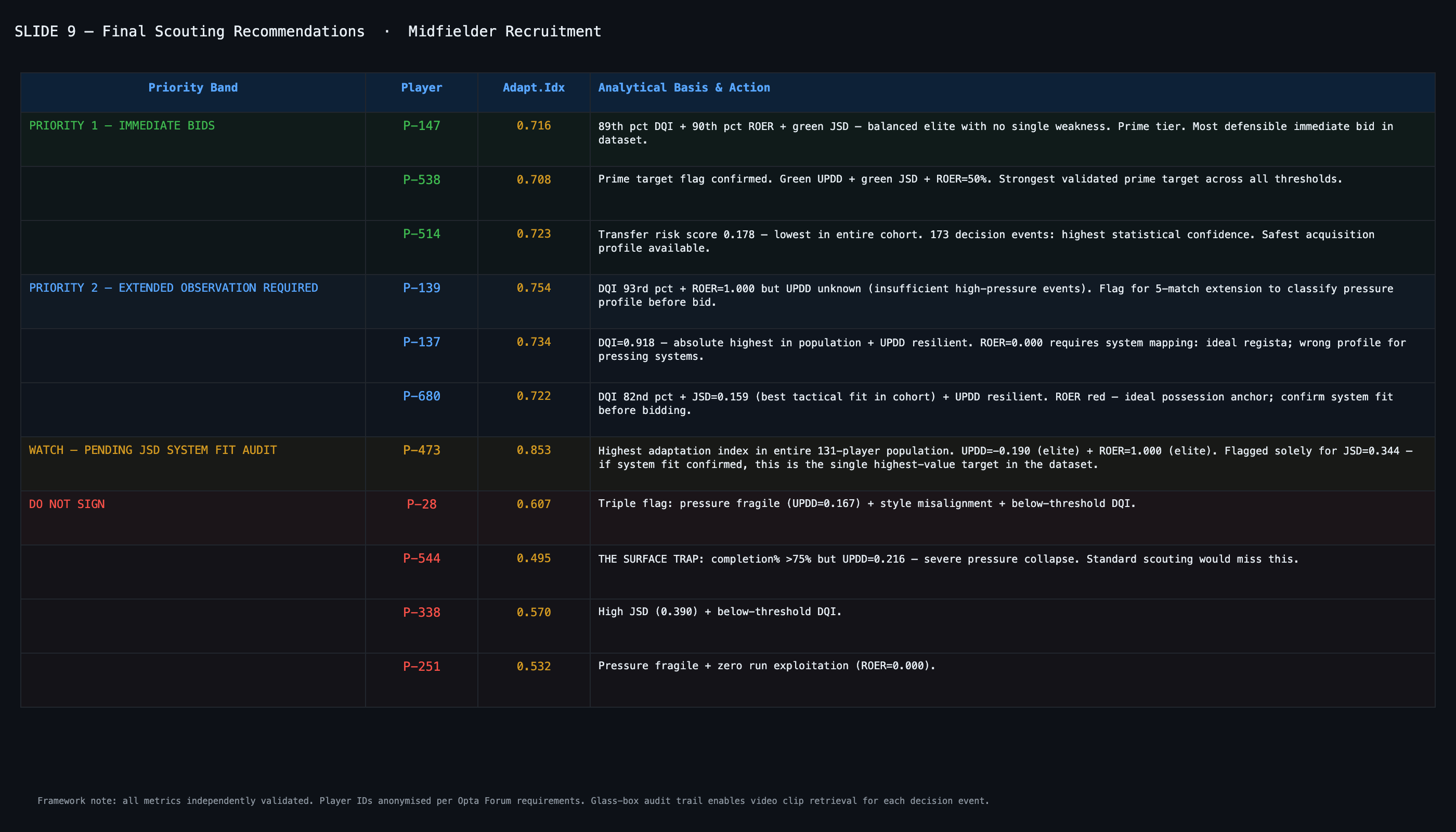

DQI — Primary metric

Decision Quality Index

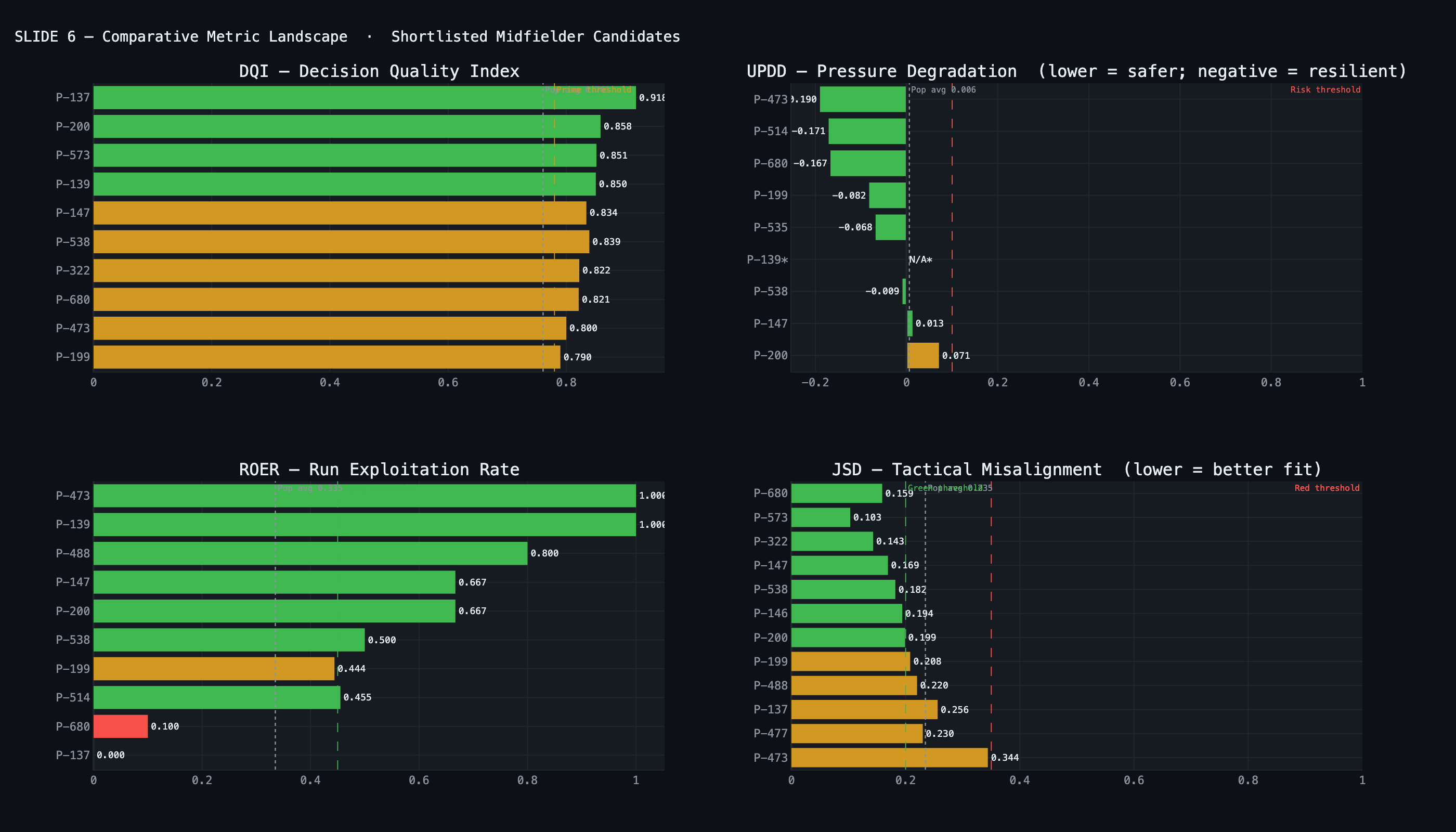

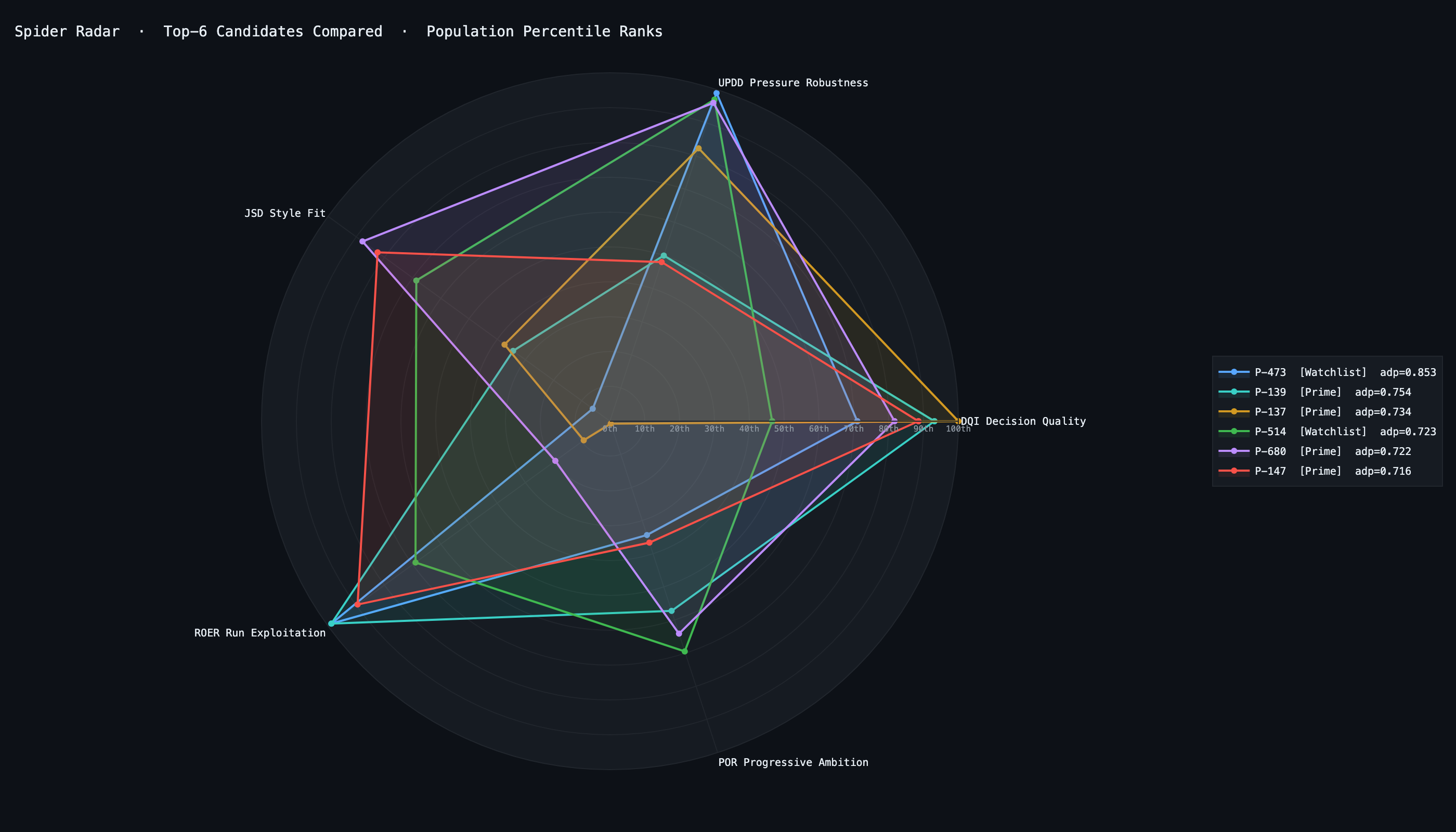

$$DQI_{regret} = 1 - \frac{OVS_{best} - OVS_{chosen}}{OVS_{best} - OVS_{min}}$$

Decision-rich events only: ≥2 options above OVS_COMPETITOR_TAU (removes reflex decisions). Range [0,1]; 1.0 = chose the optimal option, 0 = chose the lowest-valued option. Softmax temperature T = 0.10.
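The regret form can be sketched as below. Assumptions: the value `0.05` for `OVS_COMPETITOR_TAU` is a placeholder (the source names the threshold but not its value), the threshold is read as an absolute OVS cut-off, and events with identical best and minimum OVS are skipped because the ratio is undefined. The softmax variant is not shown.

```python
from typing import Optional, Sequence

OVS_COMPETITOR_TAU = 0.05  # placeholder value, not taken from the source


def dqi_regret(option_ovs: Sequence[float], chosen_idx: int,
               tau: float = OVS_COMPETITOR_TAU) -> Optional[float]:
    """Return DQI_regret for one event, or None if it is not decision-rich."""
    # Decision-rich filter: at least two options above the competitor threshold.
    competitors = [v for v in option_ovs if v > tau]
    if len(competitors) < 2:
        return None

    best, worst = max(option_ovs), min(option_ovs)
    if best == worst:
        return None  # assumption: undefined ratio, so the event is skipped

    chosen = option_ovs[chosen_idx]
    return 1.0 - (best - chosen) / (best - worst)


# Hypothetical event with three options; the player picked the middle one.
print(dqi_regret([0.40, 0.30, 0.20], chosen_idx=1))
```

Choosing the top option returns 1.0, and choosing the bottom option returns 0.0, matching the stated range.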

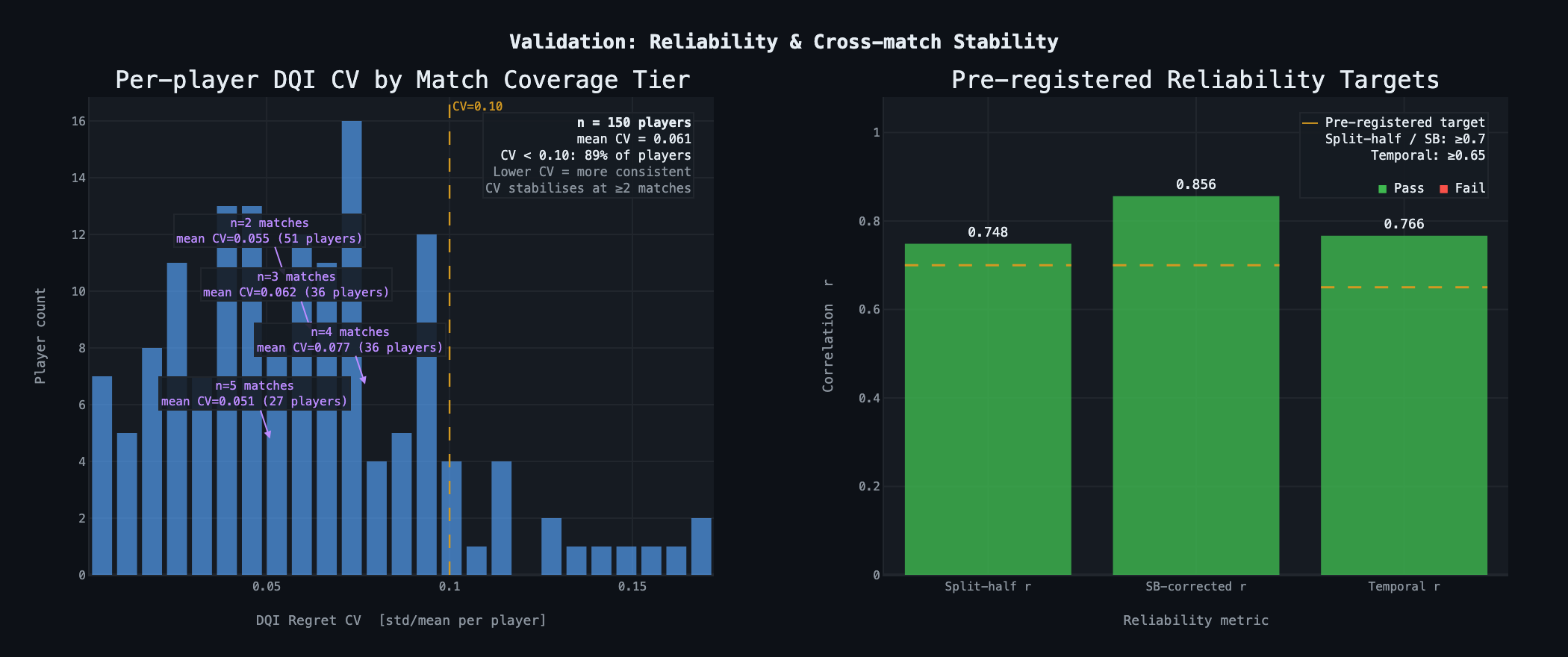

Split-half r = 0.748 / SB = 0.856 · Temporal r = 0.766 · External criterion: ρ(DQI, turnover_high_pressure) = −0.642, r_rb = −0.858. Dose-response Q1→Q4: 33.8% → 26.0% → 20.9% → 17.5%.
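Reading "SB" as the Spearman-Brown correction for a doubled test length is an assumption, but the reported pair is consistent with it, as this short check shows.

```python
def spearman_brown(r_half: float) -> float:
    """Spearman-Brown prophecy formula for doubling test length."""
    return 2.0 * r_half / (1.0 + r_half)


# Split-half r reported for DQI.
print(round(spearman_brown(0.748), 3))  # 0.856
```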

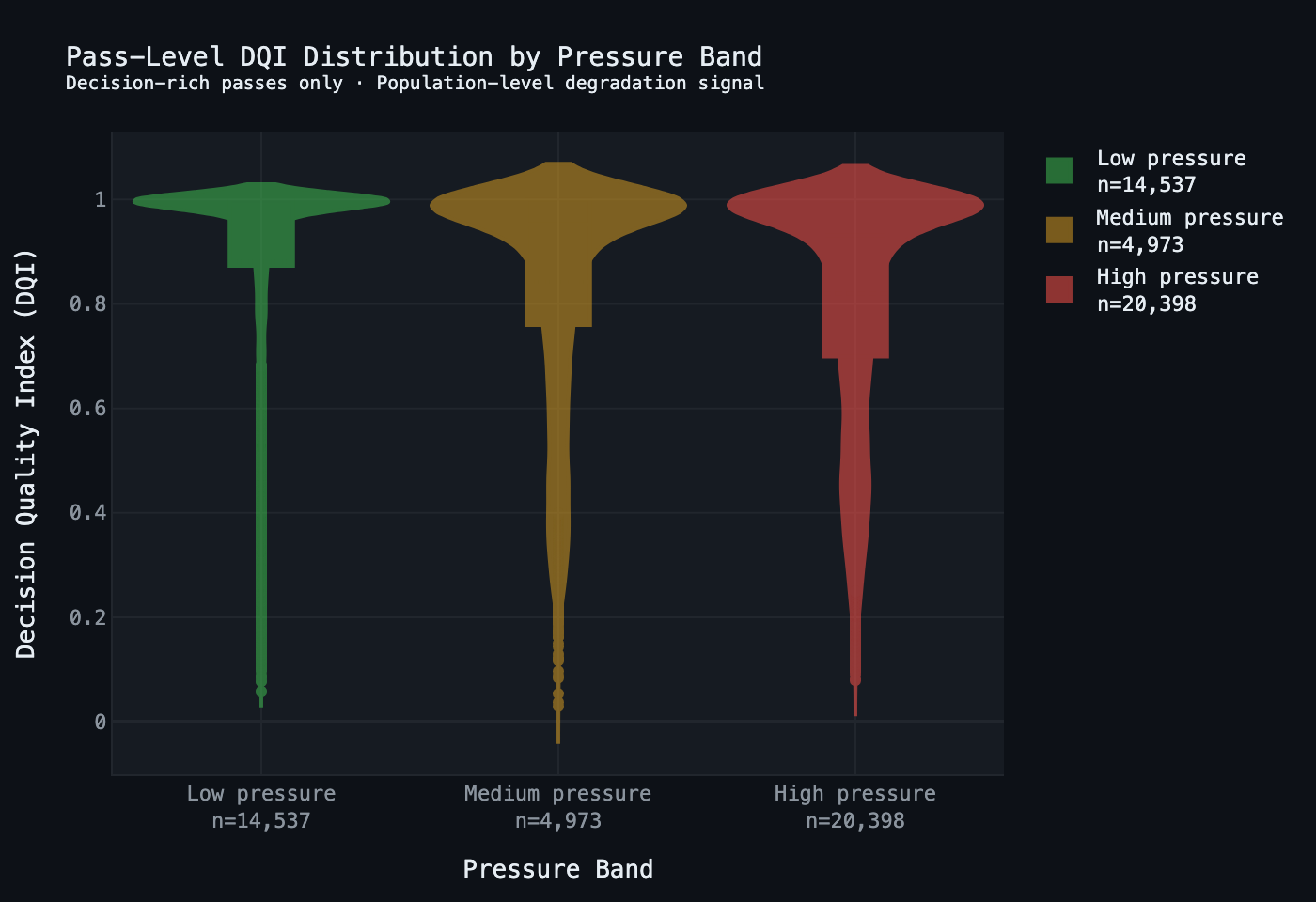

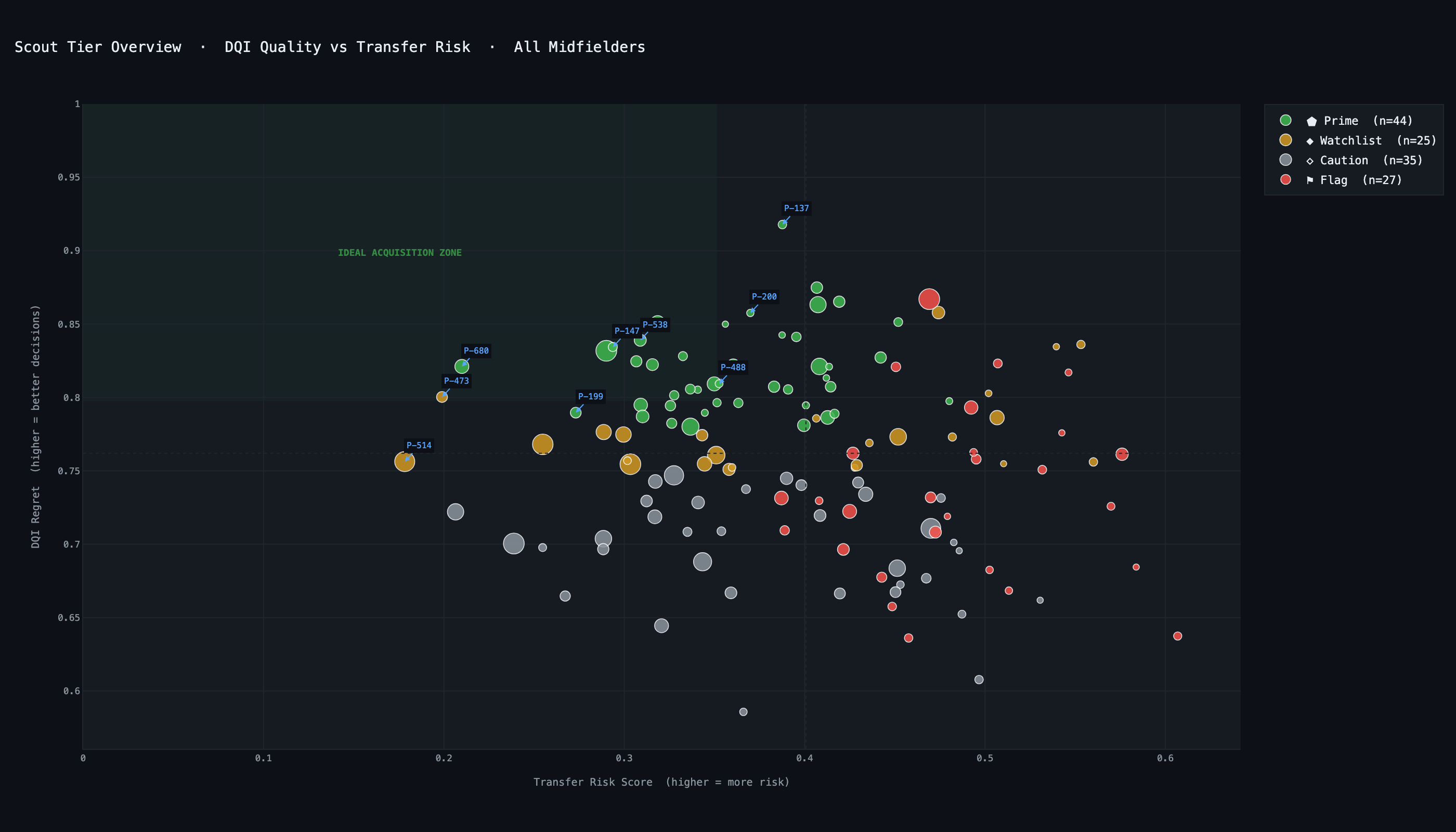

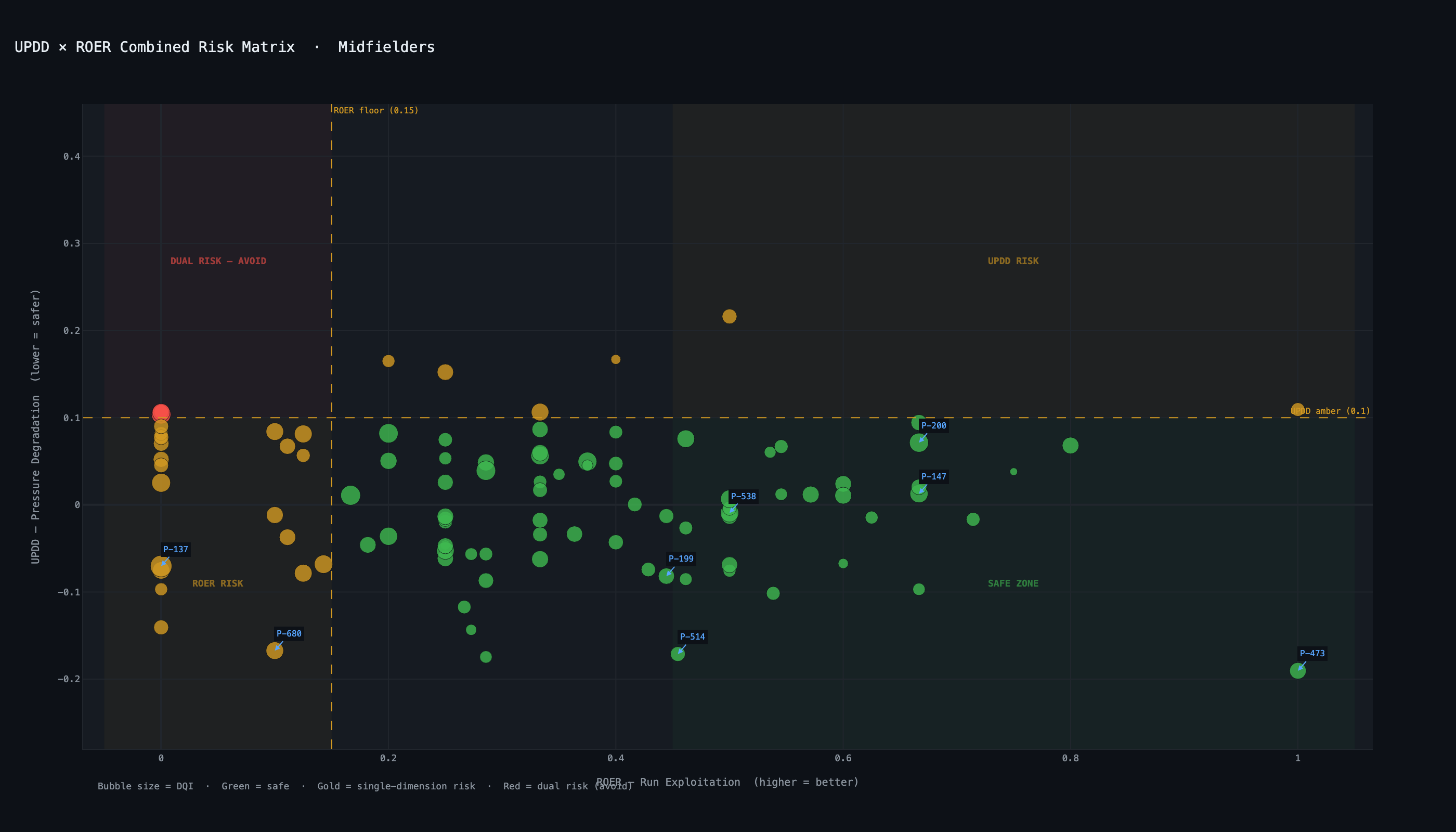

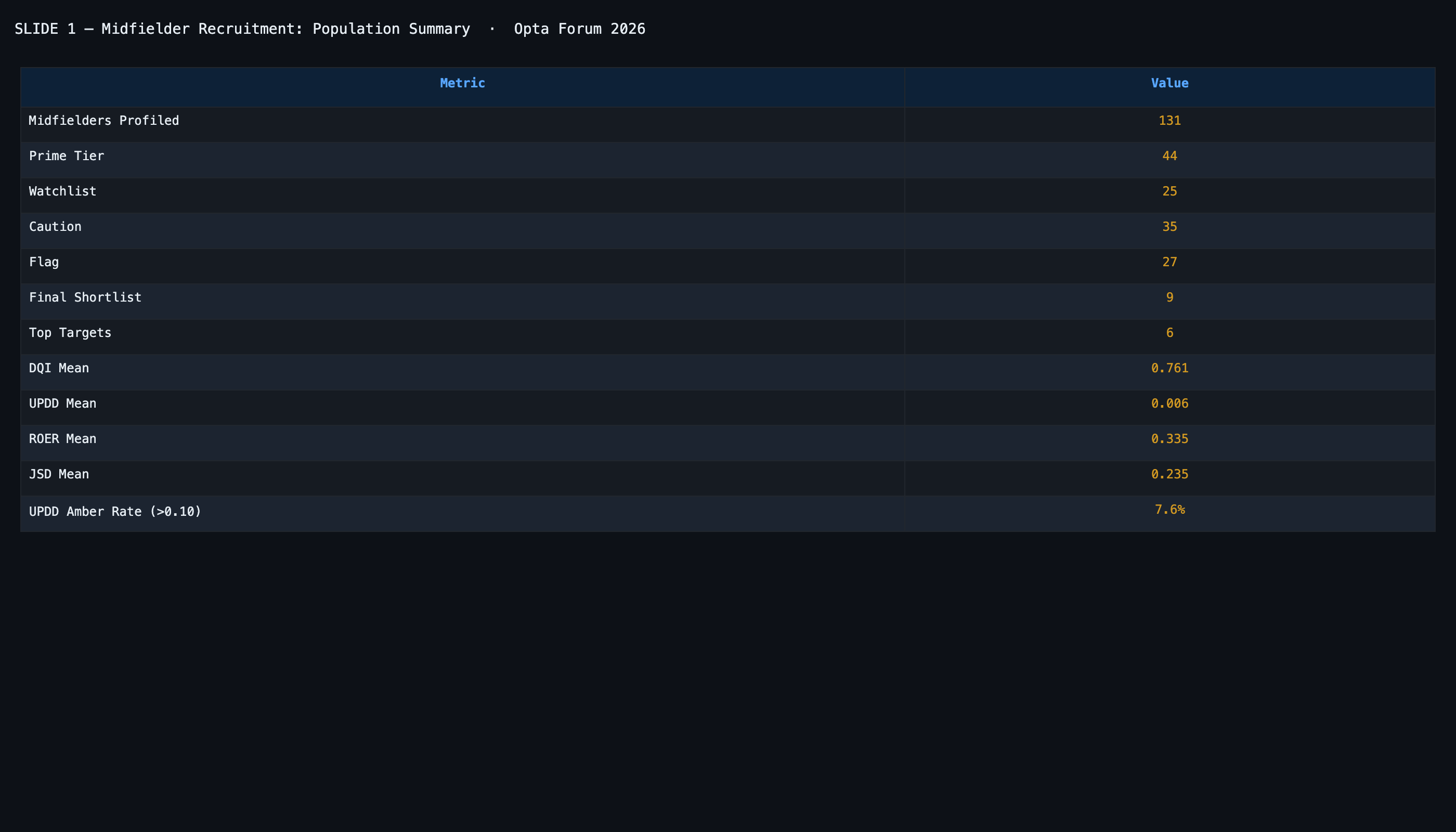

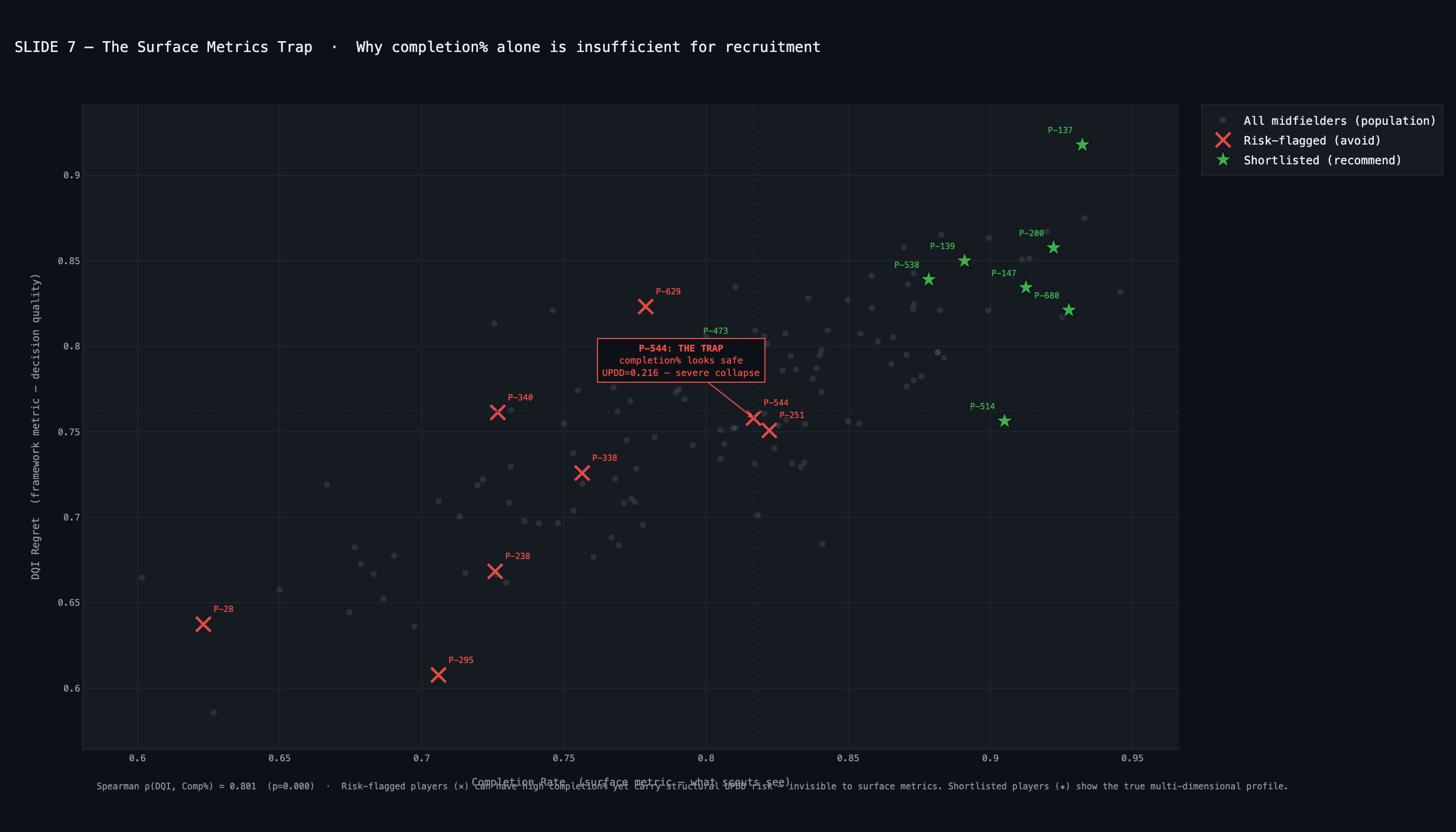

UPDD — Transfer risk signal

Under-Pressure Decision Degradation

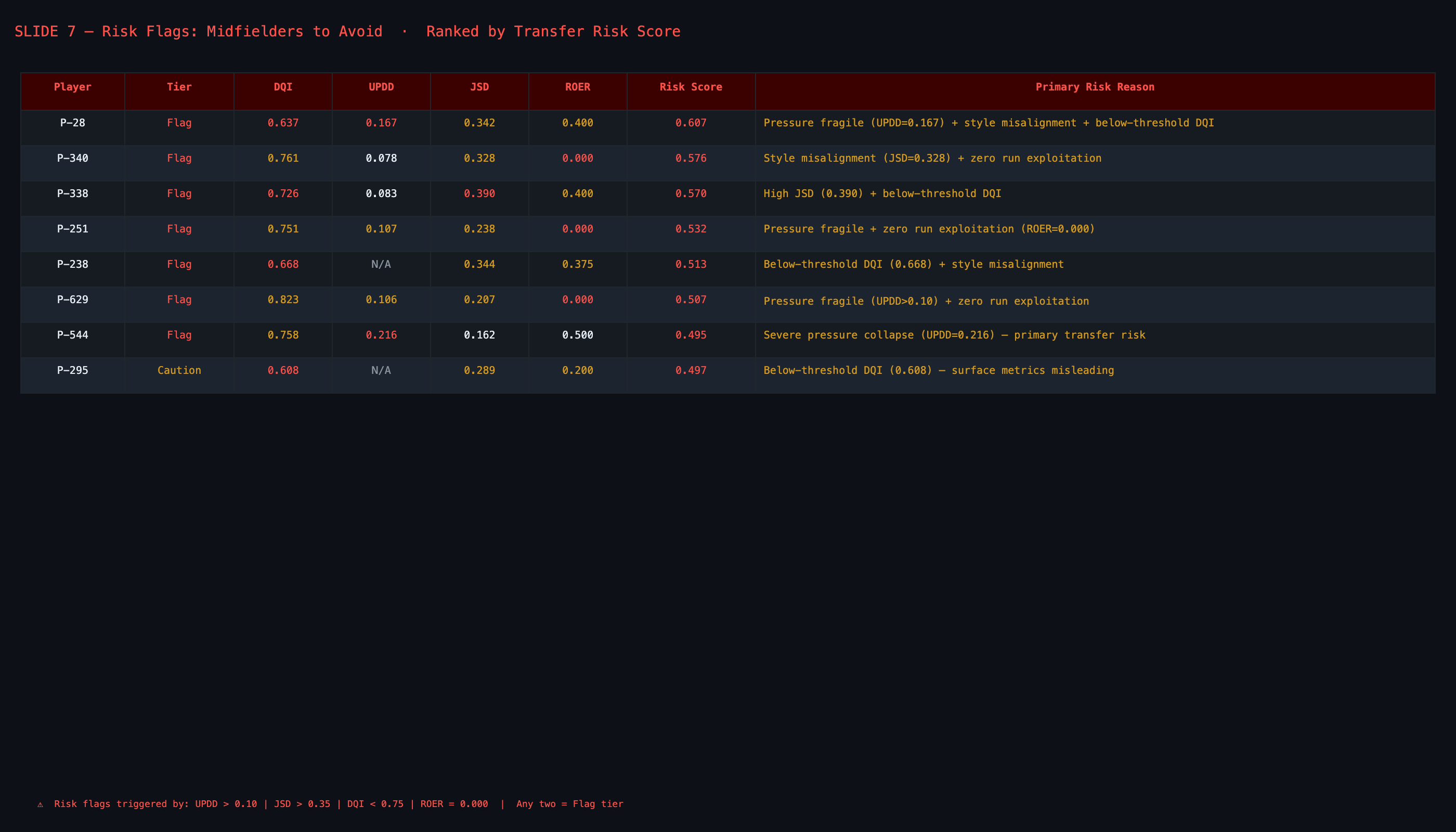

$$UPDD = \overline{DQI}_{low} - \overline{DQI}_{high}$$

Requires ≥15 decision-rich passes per pressure band. Medium-pressure excluded — extremes only. Positive = quality drops. Negative = player improves under pressure (pressure-resilient).

Tiers: Resilient (<0) · Neutral (0–0.10) · At-Risk (0.10–0.15) · High-Risk (>0.15). Unknown reported for 24.9% with insufficient band coverage — not imputed neutral. Cohen's d ≈ 0.556.
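A sketch of the UPDD calculation and tier assignment, assuming per-pass DQI values have already been split into low- and high-pressure bands. The listed tier ranges share their endpoints, so the boundary handling here (0.10 counted as Neutral, 0.15 as At-Risk) is an assumption rather than the documented rule.

```python
from statistics import mean
from typing import Optional, Sequence

MIN_PASSES_PER_BAND = 15  # minimum decision-rich passes per pressure band


def updd(dqi_low: Sequence[float], dqi_high: Sequence[float],
         min_n: int = MIN_PASSES_PER_BAND) -> Optional[float]:
    """Mean DQI under low pressure minus mean DQI under high pressure."""
    if len(dqi_low) < min_n or len(dqi_high) < min_n:
        return None  # insufficient band coverage: reported as Unknown
    return mean(dqi_low) - mean(dqi_high)


def updd_tier(value: Optional[float]) -> str:
    """Map a UPDD value to its tier (boundary inclusivity is assumed)."""
    if value is None:
        return "Unknown"  # never imputed as Neutral
    if value < 0:
        return "Resilient"
    if value <= 0.10:
        return "Neutral"
    if value <= 0.15:
        return "At-Risk"
    return "High-Risk"


# Hypothetical player: 15 passes in each band.
score = updd([0.8] * 15, [0.6] * 15)
print(score, updd_tier(score))
```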

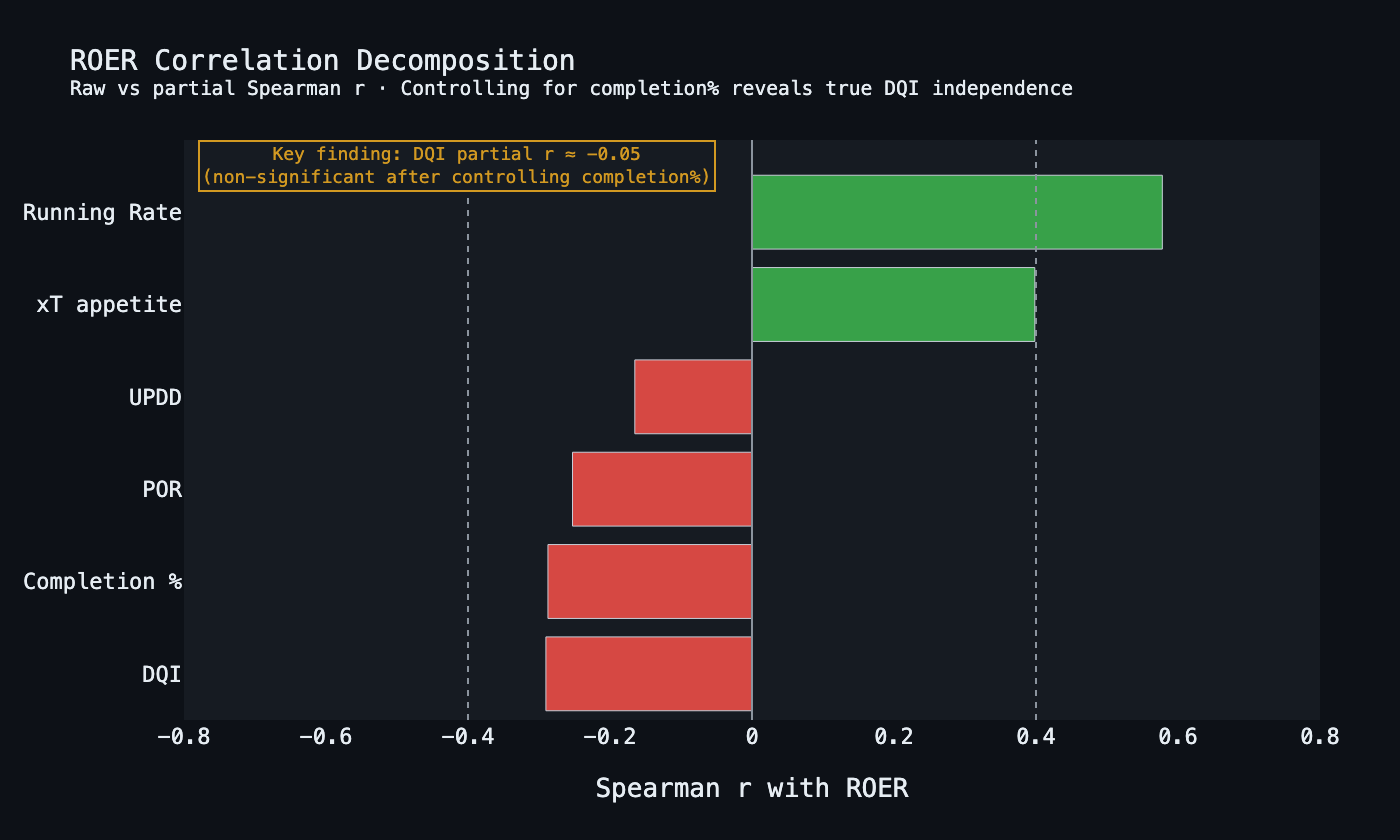

ROER — Movement awareness

Run-Option Exploitation Rate

$$ROER = \frac{\sum \mathbf{1}[\text{exploited} \mid \text{opportunity}]}{\sum \mathbf{1}[\text{opportunity}]}$$

Opportunity: ≥1 active run AND best running OVS > chosen OVS + τ (τ=0.02). Exploitation: chosen receiver had activeRun=True from passTargets.

τ removes trivial dilemmas. Positional gradient confirmed (K-W p<0.05): Def 0.226 → CMid 0.330 → Fwd 0.380–0.452. Position z-scoring mandatory. Partial r(DQI | completion%) = −0.052 — orthogonal after confounder removal.
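A sketch of the rate and the position z-scoring step, assuming each event already carries `opportunity` and `exploited` flags computed upstream from the definitions above. The data layout, the player and position labels, and the use of the population standard deviation are all illustrative assumptions.

```python
from collections import defaultdict
from statistics import mean, pstdev
from typing import Dict, Iterable, Optional, Tuple


def roer(events: Iterable[Tuple[bool, bool]]) -> Optional[float]:
    """events: (opportunity, exploited) pairs. Rate is conditional on opportunity."""
    exploited_flags = [exploited for opportunity, exploited in events if opportunity]
    if not exploited_flags:
        return None  # no opportunities, so the rate is undefined
    return sum(exploited_flags) / len(exploited_flags)


def zscore_within_position(
    raw: Dict[str, Tuple[str, float]]
) -> Dict[str, float]:
    """raw maps player -> (position, value); returns player -> z within position."""
    by_position = defaultdict(list)
    for position, value in raw.values():
        by_position[position].append(value)

    z = {}
    for player, (position, value) in raw.items():
        mu = mean(by_position[position])
        sd = pstdev(by_position[position])  # population SD (assumption)
        z[player] = (value - mu) / sd if sd > 0 else 0.0
    return z


# Hypothetical players in two position groups.
raw_roer = {"p1": ("Def", 0.2), "p2": ("Def", 0.3),
            "p3": ("Fwd", 0.4), "p4": ("Fwd", 0.5)}
print(zscore_within_position(raw_roer))
```

Scoring within position keeps a defender's raw 0.3 from being read as worse than a forward's 0.4 when each is above their own group mean by the same margin.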

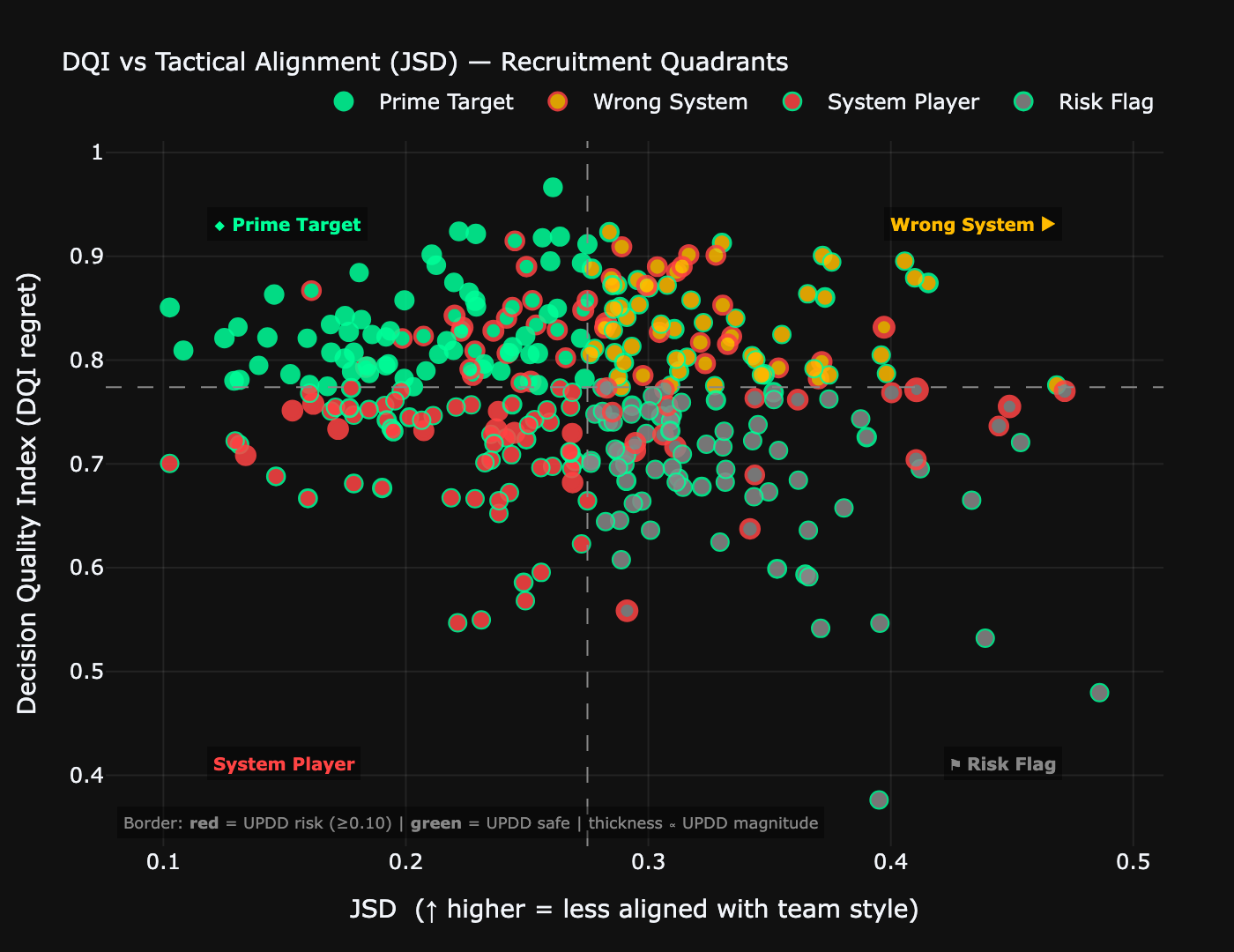

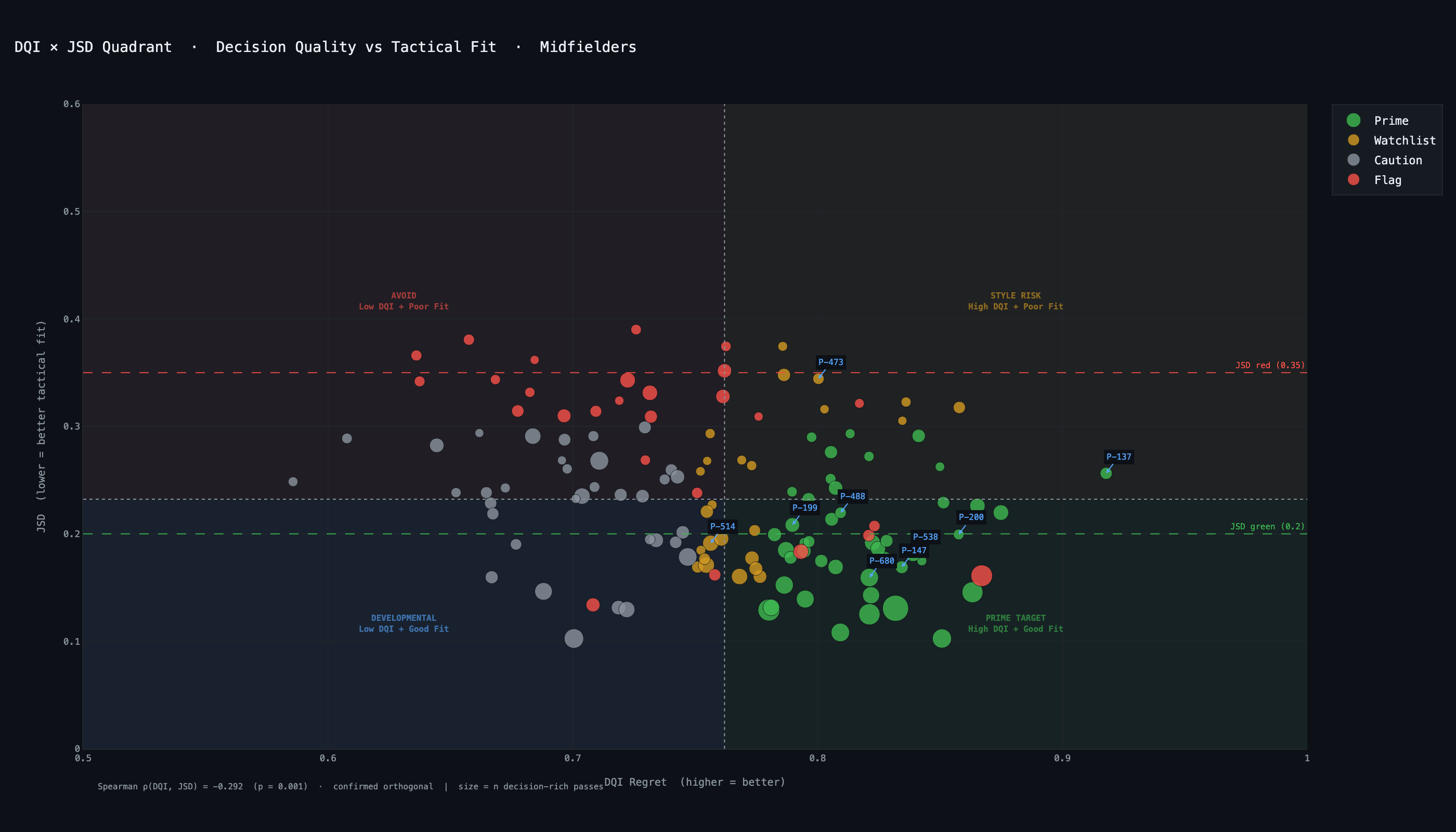

JSD — Tactical fit

Jensen-Shannon Divergence

$$JSD(P \| Q) = \frac{1}{2}D_{KL}(P \| M) + \frac{1}{2}D_{KL}(Q \| M)$$

$$M = \frac{P+Q}{2}$$

P = player's phase-conditional passing distribution; Q = target system profile. JSD ∈ [0,1] when logarithms are base 2 (the upper bound is ln 2 with natural logs); 0 = perfect alignment. Symmetric and bounded, both advantages over raw KL divergence.

Orthogonal to DQI: |r| = 0.131 (target <0.40 ✓). C.Mids most aligned (0.218); wide fwds most divergent (0.310). Split-half r = 0.560 / SB = 0.718.
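A self-contained sketch of the divergence using base-2 logarithms, which is what bounds the result to [0,1]. It assumes P and Q are already normalised probability vectors over the same categories; the example distributions are hypothetical.

```python
import math
from typing import Sequence


def kl_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """D_KL(p || q) in bits; terms with p_i = 0 contribute nothing."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def jsd(p: Sequence[float], q: Sequence[float]) -> float:
    """Jensen-Shannon divergence between two normalised distributions."""
    if len(p) != len(q):
        raise ValueError("p and q must cover the same categories")
    # Mixture M = (P + Q) / 2; M_i > 0 wherever P_i or Q_i is positive.
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)


# Hypothetical passing distributions over three pass categories.
player = [0.5, 0.3, 0.2]
system = [0.4, 0.4, 0.2]
print(round(jsd(player, system), 4))
```

Identical distributions give 0, and distributions with no overlap give 1.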

POR — Structural ambition

Progressive Override Rate

$$POR_z = z\!\left(\frac{\sum \mathbf{1}[\text{structural override}]}{\sum \mathbf{1}[\text{decision-rich}]}\right)$$

Three simultaneous conditions: (a) chose below-maximum OVS, (b) structurally ambitious (LBP ≥ 1 OR progressive gain > 0.65), (c) genuine dilemma existed. z-scored within position group.

Orthogonal to DQI: r = −0.036 (p = 0.91). Measures deliberate structural ambition — sacrificing expected value for a line-breaking pass when a genuine alternative existed.
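The three conditions can be sketched as a single predicate followed by a raw rate. The `Decision` record and its field names are hypothetical, "genuine dilemma" is equated with the decision-rich flag as an assumption, and neither the LBP boost on the chosen pass nor the within-position z-scoring step is applied here.

```python
from dataclasses import dataclass
from typing import Optional, Sequence

PROGRESSIVE_GAIN_MIN = 0.65  # threshold quoted in condition (b)


@dataclass
class Decision:
    chosen_ovs: float        # OVS of the pass actually played
    best_ovs: float          # maximum OVS among available options
    lbp_count: int           # lines broken by the chosen pass
    progressive_gain: float  # progressive gain of the chosen pass
    decision_rich: bool      # stands in for "genuine dilemma existed"


def is_structural_override(d: Decision) -> bool:
    below_max = d.chosen_ovs < d.best_ovs                                   # (a)
    ambitious = d.lbp_count >= 1 or d.progressive_gain > PROGRESSIVE_GAIN_MIN  # (b)
    return below_max and ambitious and d.decision_rich                      # (c)


def por_raw(decisions: Sequence[Decision]) -> Optional[float]:
    """Share of decision-rich events that are structural overrides (before z-scoring)."""
    rich = [d for d in decisions if d.decision_rich]
    if not rich:
        return None
    return sum(is_structural_override(d) for d in rich) / len(rich)


# Hypothetical decisions for one player.
sample = [
    Decision(0.30, 0.40, 1, 0.20, True),   # override: below max, breaks a line
    Decision(0.40, 0.40, 1, 0.20, True),   # chose the max, so not an override
    Decision(0.30, 0.40, 0, 0.10, True),   # below max but not ambitious
    Decision(0.30, 0.40, 2, 0.90, False),  # not decision-rich, excluded
]
print(por_raw(sample))
```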